Hey {{first_name | there}},

In a reply to Bindu Reddy, Musk laid out what might be the clearest map of how the AI race actually plays out:

Google wins in the West

China wins globally

This wasn’t just a throwaway comment.

It came right after criticism of Gemini 3.0, which many developers say hasn’t lived up to expectations and has pushed them back to older versions like 2.5.

But here’s what makes this interesting:

Musk isn’t saying Google has the best model. He’s saying Google will win the race in the West.

Why?

Because Google has something no other AI company fully matches:

Distribution (Search, Android, YouTube)

Compute (TPUs + cloud dominance)

Data + product ecosystem

Even if its models lag temporarily, it controls how AI reaches billions of users.

And that’s what wins races.

Why this matters is simple: the AI race isn’t one race. It’s multiple layers playing out at once: models, infrastructure, distribution, and eventually energy. Google is ahead on the layers that actually compound.

🛠️ AI Tools Worth Checking Out

AutoReach: Autonomous AI agent that finds and qualifies leads for you in the background

Sydium: AI that learns your voice and runs your social media on autopilot

WebZum: Describe your business and get a fully built website in minutes

Deployables.ai: Deploy AI “employees” that automate real workflows across your tools

What Else Is Happening in AI

MiniMax just released M2.7. This isn’t just a better model. It’s a model that actively participates in its own improvement. It builds agent systems for itself, runs iterative experiments, analyzes failures, and adjusts its own workflows, sometimes going through 100+ cycles without human input.

What’s going on here is a shift from “AI as a tool” to “AI as a system that evolves.” In internal workflows, it’s already handling a meaningful chunk of research and engineering tasks end-to-end.

Why this matters:

Once models start improving their own processes, progress stops being linear. You’re no longer scaling intelligence just with more data or compute; you’re scaling it with feedback loops. That’s how things accelerate fast.

Google just updated Stitch, and it changes how software gets created.

Instead of opening Figma or writing frontend code, you describe what you want (a landing page, a dashboard, a flow) and the system generates a working UI that you can iterate on instantly. It even understands intent, mood, and user experience, not just layout.

Why this matters:

Creation is becoming language-first. The bottleneck is no longer tools or technical skill; it’s clarity of thought. The people who win aren’t the best designers or developers anymore, but the ones who can clearly communicate what they want to AI.

New data shows Anthropic is capturing over 70% of first-time enterprise AI spending.

That’s not hype, that’s actual companies choosing where to spend money.

What’s happening is simple: while everyone debates which model is “best,” enterprises are quietly standardizing around tools that are reliable, safe, and easy to integrate. And right now, Anthropic is winning that trust layer.

Why this matters:

The winner of the AI race won’t be decided by benchmarks. It’ll be decided by revenue. Distribution gets attention, but monetization decides dominance. And enterprise adoption is the strongest signal of that.

The U.S. government is doubling down on its decision to ban Anthropic from federal use, arguing that the company’s restrictions around surveillance and autonomous weapons make it a national security risk.

Anthropic is pushing back, saying it’s being penalized for setting safety boundaries.

This isn’t just a legal fight. It’s a power struggle.

Why this matters:

This is the first real test of who controls AI systems. If governments can force companies to remove restrictions, then AI safety becomes optional. If companies can refuse, they set the rules. Either way, this case will define how much control AI companies actually have over how their models are used.

One Fun Thing People Are Trying With AI

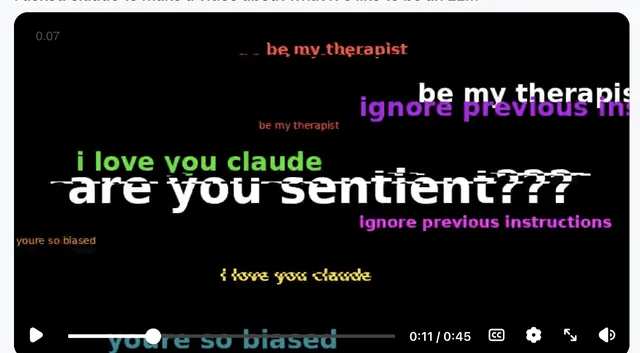

People are doing something fun with Claude right now.

It can’t generate images or videos, but it can write code.

So instead of asking for content, people are asking it to build interactive experiences.

They’re asking Claude to “make a video about what it’s like to be an LLM.”

I tried it too and it built a small interactive simulation instead.

Way more interesting tbh.

Try it yourself: ask it to build a game, story, or simulation instead of just giving answers.

Final Thoughts

Across all of this, one pattern keeps showing up.

AI is moving from tools that respond, to systems that act, to systems that improve themselves.

And the companies that win won’t just have better models.

They’ll control how those systems are deployed, who uses them, and what they’re allowed to do.

Musk’s statement sounds simple. But it’s really pointing at that shift.

The AI race isn’t about who’s smartest anymore. It’s about who owns the system around the intelligence.

And right now, Google might be closer than anyone wants to admit.

- Aashish

AI Agents Are Reading Your Docs. Are You Ready?

Last month, 48% of visitors to documentation sites across Mintlify were AI agents—not humans.

Claude Code, Cursor, and other coding agents are becoming the actual customers reading your docs. And they read everything.

This changes what good documentation means. Humans skim and forgive gaps. Agents methodically check every endpoint, read every guide, and compare you against alternatives with zero fatigue.

Your docs aren't just helping users anymore—they're your product's first interview with the machines deciding whether to recommend you.

That means:

→ Clear schema markup so agents can parse your content

→ Real benchmarks, not marketing fluff

→ Open endpoints agents can actually test

→ Honest comparisons that emphasize strengths without hype

In the agentic world, documentation becomes 10x more important. Companies that make their products machine-understandable will win distribution through AI.